Today, physicians spend about 49% of their workday documenting clinical visits, which affects physician productivity and patient care. For every eight hours that office-based physicians have scheduled with patients, they spend more than five hours in the electronic health record (EHR). As a result, healthcare practitioners are increasingly interested in conversational intelligence solutions, in which the doctor-patient dialogue is transcribed automatically during the consultation and then summarized into clinical documentation using artificial intelligence (AI), saving time.

The Live Meeting Assistant (LMA) for healthcare solution is built using the power of generative AI and Amazon Transcribe, enabling real-time assistance and automated generation of clinical notes during virtual patient encounters. LMA was originally developed as a solution for real-time transcription and note taking during virtual meetings, as described in the launch blog post. LMA for healthcare is an extended version of the Live Meeting Assistant solution that has been adapted to generate clinical notes automatically during virtual doctor-patient consultations. The solution captures speaker audio and metadata directly from your browser-based meeting application (currently compatible with Zoom and Chime, with others coming), and audio from other browser-based meeting tools, softphones, or other audio input. It then accurately converts speech to text with Amazon Transcribe and uses foundation models (FMs) from Amazon Bedrock to generate tailored clinical notes in real time.
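To make the last step of that flow concrete, the following is a minimal sketch of how a finished transcript might be sent to an Anthropic Claude 3 model on Amazon Bedrock. It is not the code LMA itself uses. The function names, the `<transcript>` tag convention, and the model ID are assumptions for illustration, so confirm the model IDs enabled in your account before running it.

```python
import json

# Assumed model ID for Claude 3 Sonnet on Amazon Bedrock; verify which model
# IDs are enabled in your account and Region.
MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"


def build_request_body(transcript: str, instruction: str, max_tokens: int = 1024) -> str:
    """Build a JSON request body in the Anthropic Messages format used on Bedrock."""
    # Hypothetical prompt layout: instruction first, transcript wrapped in tags.
    prompt = f"{instruction}\n\n<transcript>\n{transcript}\n</transcript>"
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    }
    return json.dumps(body)


def generate_note(transcript: str, instruction: str) -> str:
    """Send the transcript to Bedrock and return the model's text reply.

    Requires boto3 and AWS credentials with access to the chosen model.
    """
    import boto3  # imported lazily so build_request_body works without AWS access

    client = boto3.client("bedrock-runtime")
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=build_request_body(transcript, instruction),
    )
    payload = json.loads(response["body"].read())
    return payload["content"][0]["text"]
```

Keeping the request builder separate from the network call means you can inspect or unit test the prompt without invoking a model.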

The LMA for healthcare helps healthcare professionals to provide personalized recommendations, enhancing the quality of care. By using the solution, clinicians don’t need to spend additional hours documenting patient encounters. Automated transcription of conversations, coupled with state-of-the-art (SOTA) large language models (LLMs), enables the generation of draft clinical notes for EHRs or other downstream systems. This alleviates the documentation burden: instead of writing from scratch, clinicians start with a preliminary draft, review it, and make any necessary amendments. This gives healthcare professionals more time to focus on patient care and reduces the risk of clinician burnout.

We invite you to explore the following demo, which showcases the LMA for healthcare in action using a simulated patient interaction.

What are the differences between AWS HealthScribe and the LMA for healthcare?

AWS HealthScribe is a fully managed API-based service that generates preliminary clinical notes offline after the patient’s visit, intended for application developers. It has been robustly tested against datasets to minimize hallucination and ensure that each sentence in the summaries is linked to the original transcript through evidence mapping, which is crucial for efficient review and accuracy validation.

LMA for healthcare is an open source end-to-end application layer solution that acts as a virtual assistant for clinicians, boosting productivity and alleviating administrative burdens, including but not limited to clinical documentation. It uses many AWS services focused on providing a real time transcription and generative AI experience out of the box, and can be used as is, customized as needed, and adapted to create bespoke features and integrations. While LMA offers flexibility using underlying AWS services such as Amazon Bedrock, ensuring accuracy, reducing hallucinations, and providing evidence mapping requires additional effort compared to the pre-built robustness provided by AWS HealthScribe. In the future, we expect LMA for healthcare to use the AWS HealthScribe API in addition to other AWS services.

Solution overview

Everything you need is provided as open source in our GitHub repo and is straightforward to deploy in your AWS account. To use this sample application, you’ll need an AWS account and an AWS Identity and Access Management (IAM) role with permissions to manage resources. If you don’t have an AWS account yet, you can create one following the instructions in How do I create and activate a new AWS account?

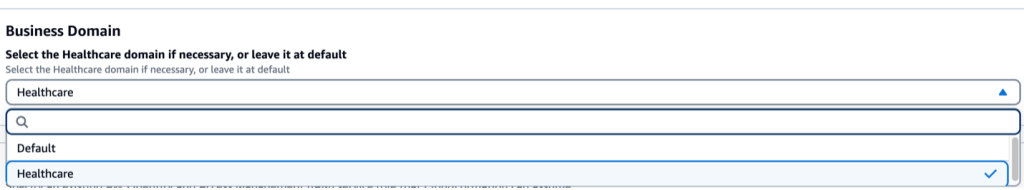

Follow the instructions in Deploy the solution using AWS CloudFormation in this LMA blog post to start deploying the solution. To deploy the LMA for healthcare, select Healthcare from the dropdown menu as your domain.

The LMA blog post covers deployment steps, including downloading and installing the Chrome browser extension, initiating LMA usage, process flow, monitoring and troubleshooting procedures, cost evaluation, and customization options for your deployment.

This blog post focuses on the Amazon Transcribe LMA solution for the healthcare domain. The Live Meeting Assistant (LMA) for healthcare facilitates efficient documentation following patient visits. It automatically generates comprehensive post-call summaries, highlights key topics discussed between the doctor or clinician and the patient, and presents clinical notes in structured formats like SOAP (Subjective, Objective, Assessment, Plan) and BIRP (Behavior, Intervention, Response, Plan). Using the meeting assist bot, it can also summarize ongoing discussions, identify key topics mentioned, and list the patient’s symptoms as they come up during the conversation.

By choosing ASK ASSISTANT, the healthcare professional can prompt the meeting assistant, which taps into an Amazon Bedrock knowledge base (if enabled), to propose suitable responses based on the recent meeting interactions captured in the transcript. Prompting is a technique used in natural language processing (NLP) and language models to provide context or guidance to the model, allowing it to generate relevant and coherent output.

An Amazon Bedrock knowledge base allows you to consolidate various data sources into a centralized information repository. This feature enables you to create applications that use Retrieval-Augmented Generation (RAG), a technique where information retrieval from data sources enhances the model’s response generation. With the LMA, you have the option to integrate with an Amazon Bedrock knowledge base and provide your organization’s data. Additionally, the knowledge base can crawl external websites (for example, the CDC website), allowing it to look up relevant information in the context of the conversation during patient visits.
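The RAG pattern itself can be illustrated with a small, self-contained sketch. It does not call Amazon Bedrock: a managed knowledge base typically relies on embeddings and vector search, whereas this toy version ranks documents by simple word overlap as a stand-in. The `Document` class, the function names, and the example bucket name are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    source: str  # for example, an S3 URI or a web page URL
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[Document], top_k: int = 2) -> list[Document]:
    """Return up to top_k documents that share the most words with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(d.text)), d) for d in documents]
    scored = [(score, d) for score, d in scored if score > 0]  # drop unrelated documents
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]


def build_rag_prompt(question: str, passages: list[Document]) -> str:
    """Place the retrieved passages ahead of the question so the model can cite them."""
    context = "\n".join(
        f"[{i}] ({d.source}) {d.text}" for i, d in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered passages below, and cite "
        "the passage numbers you used.\n\n"
        f"{context}\n\nQuestion: {question}"
    )
```

The retrieval step narrows your data down to a few relevant passages, and the generation step sees only those passages plus the question, which is what lets the assistant cite a source in its answer.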

In the following example, research documents related to social anxiety are added to the Amazon Bedrock knowledge base. This allows you to refer to the information during live patient interaction. To activate the assistant, say “Okay, Assistant,” choose the ASK ASSISTANT! button, or enter your own question in the UI. In the following figure, we asked the assistant to share research papers on social anxiety from the set of documents we provided as input to the knowledge base during setup.

As you can see in the preceding figure, the meeting assist bot successfully answered the question asked during the live call: “Okay, Assistant is there any case study reference on social anxiety?” The bot provided a relevant response by citing a source from the Amazon Simple Storage Service (Amazon S3) bucket where the reference documents are stored.

Note: We recommend using an Amazon Bedrock knowledge base solely for information retrieval and search, not for generating direct recommendations regarding patient care.

Using an Amazon Bedrock knowledge base is optional. During patient interactions without it, you can direct general inquiries to the LLM. In such cases, the LLM will use its inherent knowledge and capabilities to provide relevant responses without relying on your specific data.

The LMA solution is flexible and customizable. Healthcare professionals can add prompts or customize existing ones, allowing the LMA to generate output tailored to their specific requirements. This lets you adapt the LMA solution to the unique documentation workflows and preferences of different healthcare settings across the globe. Follow the instructions to see how to update the existing prompt templates or add prompts based on your specific requirements.

Additionally, if you’re interested in creating your own tailored version of the LMA for other domains, see the developer README.

Common clinical documentation formats

Let’s start by examining the common clinical document formats used by healthcare professionals such as doctors and clinicians. These formats are intended to aid in documenting patient visits, capturing the patient’s concerns, examination findings, diagnostic assessments, and treatment plans. Some of the widely used clinical note formats are SOAP, BIRP, DAP (Data, Assessment, Plan), and GIRP (Goal, Intervention, Response, Plan).

The SOAP note is written after patient consultations or therapy sessions and might look like the following:

S (Subjective):

Patient is a 65-year-old male presenting with complaints of fatigue and shortness of breath for the past 2 weeks. He denies chest pain, cough, or fever.

O (Objective):

Vital Signs: BP 142/88, HR 92, RR 18, Temp 98.6°F

Physical Exam: Bilateral crackles at lung bases, trace pitting edema in lower extremities, JVD present

Labs: BNP 550 pg/mL

A (Assessment):

Congestive Heart Failure, decompensated

P (Plan):

Initiate furosemide 40 mg daily

Add lisinopril 10 mg daily

Lifestyle modification – salt restriction, daily weight monitoring

Follow up in 1 week

Obtain echocardiogram as outpatient

Generated using Anthropic Claude 3 Sonnet v1 model using Amazon Bedrock

In the subjective part, you capture the patient’s concerns and medical history, whereas the objective section focuses on measurable data such as vital signs and test results. The assessment section examines the gathered information for potential diagnoses. Finally, the plan outlines the treatment strategy, medications, follow-up instructions, referrals, and any additional tests or procedures.
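If you plan to pass SOAP notes to a downstream system, it can help to hold the four sections in a small data structure rather than in free text. The following is a minimal sketch; the `SoapNote` class and its field names are hypothetical and are not part of the LMA code base.

```python
from dataclasses import dataclass, field


@dataclass
class SoapNote:
    subjective: str                                      # patient's concerns and history
    objective: list[str] = field(default_factory=list)   # vitals, exam findings, labs
    assessment: str = ""                                 # potential diagnoses
    plan: list[str] = field(default_factory=list)        # treatment and follow-up items

    def render(self) -> str:
        """Render the note with the same section headings as the example above."""
        lines = ["S (Subjective):", self.subjective, "O (Objective):"]
        lines += self.objective
        lines += ["A (Assessment):", self.assessment, "P (Plan):"]
        lines += self.plan
        return "\n".join(lines)
```

For example, `SoapNote(subjective="Fatigue for 2 weeks.", objective=["BP 142/88"], assessment="Congestive Heart Failure", plan=["Follow up in 1 week"]).render()` produces the four headings in S, O, A, P order with each item on its own line.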

While SOAP notes are widely used, the BIRP format has gained popularity, especially in mental and behavioral health settings. It emphasizes a patient-centered approach, taking into account the individual’s personal, social, and cultural backgrounds and the impact of these backgrounds on their health and treatment plan. The following is an example of a BIRP note:

B (Behavior):

Patient is a 32-year-old female presenting with symptoms of anxiety and depression. She reports feeling overwhelmed, having difficulty sleeping, and a lack of motivation. Patient states her anxiety and low mood have been impacting her work performance and relationships.

I (Intervention):

Engaged patient in cognitive-behavioral therapy (CBT) techniques, including identifying negative thought patterns and developing coping strategies. Explored possible triggers and stressors contributing to her symptoms. Provided psychoeducation on anxiety and depression.

R (Response):

Patient was receptive to the CBT interventions and was able to identify some irrational thoughts. She expressed a willingness to practice the coping techniques discussed. Patient reported feeling somewhat relieved after processing her thoughts and emotions during the session.

P (Plan):

Continue CBT sessions weekly

Consider adding pharmacotherapy if symptoms persist

Recommend exercise, mindfulness practices, and stress management techniques

Encourage involvement in social activities and support system

Follow up in 2 weeks

Generated using Anthropic Claude 3 Sonnet v1 model using Amazon Bedrock

The BIRP note focuses on the patient’s behaviors and symptoms, the specific interventions used during the session, the patient’s response to those interventions, and the collaborative treatment plan going forward.

The LMA for the healthcare domain offers a powerful feature to automatically generate structured clinical notes in SOAP and BIRP format. Moreover, the LMA offers flexibility to accommodate additional clinical note formats based on your specific requirements. You can configure the LMA for healthcare to generate notes in formats such as DAP or GIRP, or even customize your own preferred note structure. This versatility ensures that the LMA seamlessly integrates with the existing documentation practices of different healthcare settings.
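One way to think about supporting several note formats is as a table of section names, with a check that a generated note contains every expected heading. The sketch below is illustrative only: `NOTE_FORMATS` and the helper functions are hypothetical names, and LMA itself configures formats through its prompt templates rather than through code like this.

```python
# Section names for the note formats mentioned in this post.
NOTE_FORMATS = {
    "SOAP": ["Subjective", "Objective", "Assessment", "Plan"],
    "BIRP": ["Behavior", "Intervention", "Response", "Plan"],
    "DAP": ["Data", "Assessment", "Plan"],
    "GIRP": ["Goal", "Intervention", "Response", "Plan"],
}


def section_headings(fmt: str) -> list[str]:
    """Return headings such as 'S (Subjective):' for the given format."""
    return [f"{name[0]} ({name}):" for name in NOTE_FORMATS[fmt]]


def missing_sections(note_text: str, fmt: str) -> list[str]:
    """List the headings of the given format that do not appear in the note."""
    return [heading for heading in section_headings(fmt) if heading not in note_text]
```

A check like `missing_sections(generated_note, "BIRP")` returning an empty list is a cheap signal that the model followed the requested structure before a clinician reviews the content.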

Prompts for common clinical documentation formats

A prompt serves as the initial text or context provided to the LLM to produce coherent and relevant output. The LMA solution comes with pre-built prompts for tasks such as summary generation, capturing meeting details, and SOAP and BIRP note generation. Additionally, for the meeting assist bot, there are prompts for key topic detection, listing patient symptoms, and so on. These healthcare-specific prompts are automatically enabled when you choose Healthcare as the value for Domain when you deploy or update your LMA stack.

Let’s examine the SOAP prompt to see how it was constructed with best practices in mind and explore how you can create a custom prompt following a similar approach. You can explore the prompts in the LLMPromptHealthcareSummaryTemplate.json file. Try various prompts on your own and let us know if you get improved results.

To generate a SOAP summary, the key aspects of the LLM prompt are:

Clear structure and format: The prompt outlines the specific structure and format of a SOAP note. Giving the LLM this well-defined structure helps the generated output follow the expected format, making it easier for healthcare professionals to understand and interpret the information.

Detailed instructions: The prompt provides detailed instructions for each section of the SOAP note, guiding the LLM on what information to include in each part. For example, the Subjective section should describe the patient’s chief complaints, symptoms, and relevant history in their own words, while the Objective section should document observations, vital signs, physical examination findings, and test results.

Example SOAP note: The prompt also includes an example SOAP note, which serves as a reference for the desired output format and level of detail. A well-written example helps the LLM reproduce the structure, language, and level of specificity required for a high-quality SOAP note.

Relevant information: The prompt instructs the LLM to base the generated SOAP note on the provided transcript, which contains relevant details about the patient’s condition, symptoms, medical history, and diagnostic test results. By having access to this information, the LLM populates the different sections of the SOAP note with the appropriate data.

Confidentiality reminder: A typical clinical note will contain personally identifiable information (PII) or protected health information (PHI) of the patient. If you want to mask or hide the information, you can prompt the LLM accordingly. As an example, the template prompt we shared reminds the LLM to maintain patient confidentiality by avoiding the use of PII or PHI in the generated output. This is an important aspect of healthcare documentation and ensures compliance with privacy regulations.
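The five aspects above can be sketched as a small prompt builder. This is not the template shipped in LLMPromptHealthcareSummaryTemplate.json. The wording, the `<example>` and `<transcript>` tags, and the function name are assumptions chosen to show how the pieces fit together.

```python
# Per-section instructions, paraphrased from the descriptions in this post.
SOAP_SECTIONS = {
    "Subjective": "the patient's chief complaints, symptoms, and relevant history in their own words",
    "Objective": "observations, vital signs, physical examination findings, and test results",
    "Assessment": "an assessment of the gathered information and potential diagnoses",
    "Plan": "the treatment strategy, medications, follow-up instructions, referrals, and further tests",
}

CONFIDENTIALITY_REMINDER = (
    "Maintain patient confidentiality: do not include personally identifiable "
    "information (PII) or protected health information (PHI) in the note."
)


def build_soap_prompt(transcript: str, example_note: str) -> str:
    """Assemble a SOAP prompt from structure, instructions, example, and transcript."""
    instructions = "\n".join(
        f"- {name[0]} ({name}): document {detail}."
        for name, detail in SOAP_SECTIONS.items()
    )
    return (
        "Write a clinical note in SOAP format with these sections, in this order:\n"
        f"{instructions}\n\n"
        f"Example SOAP note:\n<example>\n{example_note}\n</example>\n\n"
        "Base the note only on the following transcript:\n"
        f"<transcript>\n{transcript}\n</transcript>\n\n"
        f"{CONFIDENTIALITY_REMINDER}"
    )
```

To adapt the sketch to another format such as BIRP, you would swap the section table and the example note while keeping the same overall layout.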

For best practices on prompting, you can consult the documentation provided by model providers. For Anthropic, you can see their documentation for detailed guidance on prompting.

Advantages

LMA for healthcare offers numerous benefits to healthcare professionals, organizations, and ultimately, patient care. Here are some key advantages:

Reduce clinical documentation time: The LMA solution can significantly reduce the time and effort required for clinical documentation by automatically generating comprehensive notes. This not only saves valuable time for healthcare professionals but also ensures consistent documentation, reducing the risk of errors or omissions. Structured clinical notes generated by the LMA can facilitate better communication and collaboration among healthcare teams and third parties. Clear and consistent documentation can help ensure seamless care transitions and enable more informed decision-making by all involved parties.

Answer questions with knowledge: The LMA can be integrated with existing knowledge bases, such as an Amazon Bedrock knowledge base, allowing it to provide contextual and evidence-based recommendations during live consultations or when generating clinical notes. This can support more accurate diagnoses, treatment recommendations, and decision-making processes.

Enhanced patient encounter efficiency: During live patient consultations, the ASK ASSISTANT feature can be used to surface relevant information in real time, which can help the provider accurately address patient enquiries. For example, “show me the latest drugs for social anxiety disorder” or “share the latest research on depression.” This enables healthcare professionals to focus more on the patient interaction while the LMA efficiently documents the encounter, reducing the cognitive load and administrative burden.

Customization and scalability: The LMA solution is customizable, allowing healthcare organizations to tailor the prompts, language models, and knowledge bases to their specific requirements. This flexibility ensures integration and scalability across various healthcare settings and specialties.

Continuous improvement: By analyzing the LMA’s outputs and user interactions, healthcare organizations can identify areas for improvement and refine the prompting techniques, language models, and knowledge bases. This continuous learning and optimization process can lead to increasingly accurate and valuable outputs from the LMA over time.

Increased efficiency and cost savings: By automating and streamlining clinical documentation processes, the LMA can significantly reduce administrative overhead, allowing healthcare professionals to focus more on direct patient care. This increased efficiency can translate into cost savings for healthcare organizations and improved resource allocation.

Conclusion

Experience the impact of the Live Meeting Assistant for healthcare for yourself: an adaptable and personalized solution engineered to simplify your clinical note generation process in real time. By using the capabilities of Amazon AI and machine learning (ML) services in conjunction with Amazon Bedrock LLMs, this sample solution transcribes, translates, fact checks, and answers questions in real time from your knowledge base, and generates clinical notes in multiple formats. With LMA for healthcare, healthcare providers can redirect their attention to what truly matters: delivering exceptional patient care.

The sample LMA application is available as open source, offering a robust foundation for your own project. We encourage you to enhance its functionality and share your improvements by submitting fixes and features through GitHub pull requests. Visit the LMA GitHub repository to explore the code, watch the repository to stay updated on new releases, and refer to the README for the latest documentation.

For expert guidance, AWS Professional Services and other AWS Partners are ready to assist you.

We value your feedback. Share your thoughts in the comments section or use the issues forum in the LMA GitHub repository.

About the authors

Wrick Talukdar is a Senior AI/ML Architect who focuses on computer vision, NLP, and generative AI. Wrick works with customers to help them understand and develop solutions to business problems with AWS Services and generative AI.

Prasad Prabhu is a Principal Product Manager at Amazon Web Services (AWS) AI/ML, where he focuses on growing AI services that drive innovation across various industries, including Healthcare, Financial Services, and Media & Entertainment. With nearly two decades of experience in the tech industry, Prasad specializes in building B2B enterprise software products and solutions, working at the intersection of business and technology.